Losses = F.cross_entropy(prediction, target_expanded, reduction='mean')Īcc_k = _accuracy(p, target, topk=self._topk) P = F.softmax(prediction, dim=1).mean(dim=2) Loss = F.cross_entropy(prediction, target_expanded, reduction='mean') If self.label_trick is False or reset_kld=1: Prediction = prediction_mean + torch.sqrt(prediction_variance) * normals Normal_dist = (torch.zeros_like(prediction_mean), torch.ones_like(prediction_mean)) Target_expanded = target.unsqueeze(dim=1).expand(-1, samples) Prediction_variance = output_dict.unsqueeze(dim=2).expand(-1, -1, samples) Prediction_mean = output_dict.unsqueeze(dim=2).expand(-1, -1, samples) rged_training = rged_trainingĭef forward(self, output_dict, target_dict): Self.label_trick_valid = args.label_trick_valid Super(ClassificationLossVI, self)._init_() _, pred = output.topk(maxk, 1, True, True)Ĭorrect_k = correct.reshape(-1).float().sum(0, keepdim=True) e_deterministic_algorithms(True, warn_only=True)ĭef _accuracy(output, target, topk=(1,)): It is a Bayesian Neural network used for continual learning and the loss is the ELBO loss.

This is the module by which I calculate the loss. So, the code snipper I tried does not reproduce the code but when I run my original code I get the error. These are the exact warning messages that I get when I run my code. ~/anaconda3_new/envs/first_env/lib/python3.9/site-packages/torch/autograd/ init.py:173: UserWarning: scatter_add_cuda_kernel does not have a deterministic implementation, but you set ‘e_deterministic_algorithms(True, warn_only=True)’. Return torch._C._nn.cross_entropy_loss(input, target, weight, _Reduction.get_enum(reduction), ignore_index, label_smoothing) (Triggered internally at /opt/conda/conda-bld/pytorch_1659484806139/work/aten/src/ATen/Context.cpp:82.) GitHub to help us prioritize adding deterministic support for this operation.UserWarning: nll_loss2d_forward_out_cuda_template does not have a deterministic implementation, but you set ‘e_deterministic_algorithms(True, warn_only=True)’. What do you think? I appreciate any hints and intuitions. My intuition is that the culprit here is the non-deterministic loss and the large lr is just a catalyzer. However, when the learning_rate is reduced by a factor of 10 (=0.01), I see that the gap disappears. What I have observed is that, when I use a large learning_rate (=0.1), I cannot reproduce my results and I see huge gaps. This is the only possible source of randomness I am aware of.

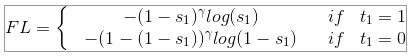

I need to add that I use XE loss and this is not a deterministic loss in PyTorch. I have faced some reproducibility issues even when I have the same seed and I set the following code: # python I have a Bayesian neural netowrk which is implemented in PyTorch and is trained via a ELBO loss.
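One way to test the intuition that the loss itself is the non-deterministic part is to run a single forward/backward pass twice with the same seed and compare the results bit for bit. The following is only a minimal sketch under stated assumptions: the two toy `Linear` heads, the `exp()` used to keep the variance positive, the tensor sizes, and the helper names `seed_everything`, `one_step` and `is_bitwise_reproducible` are all hypothetical and not part of the original code. Only the sampling step and the `F.cross_entropy` call on a `[batch, classes, samples]` prediction follow the module above. As far as I can tell, a 3-D input like this is routed through the 2-D NLL kernel, which would explain why the warning names `nll_loss2d`.

```python
import random

import torch
import torch.nn.functional as F


def seed_everything(seed: int) -> None:
    """Seed the Python and PyTorch RNGs and request deterministic kernels."""
    random.seed(seed)
    torch.manual_seed(seed)  # seeds the CPU and CUDA generators
    torch.use_deterministic_algorithms(True, warn_only=True)


def one_step(seed: int, device: str = "cpu", samples: int = 8):
    """Run one forward/backward pass of a sampled cross-entropy loss."""
    seed_everything(seed)
    batch, features, classes = 16, 10, 5

    # Hypothetical toy heads standing in for the real network outputs.
    mean_head = torch.nn.Linear(features, classes).to(device)
    log_var_head = torch.nn.Linear(features, classes).to(device)
    x = torch.randn(batch, features, device=device)
    target = torch.randint(0, classes, (batch,), device=device)

    # Shapes follow the post: [batch, classes, samples] and [batch, samples].
    prediction_mean = mean_head(x).unsqueeze(dim=2).expand(-1, -1, samples)
    # Assumption: exp() of a second head keeps the variance positive.
    prediction_variance = (
        log_var_head(x).exp().unsqueeze(dim=2).expand(-1, -1, samples)
    )
    target_expanded = target.unsqueeze(dim=1).expand(-1, samples)
    normals = torch.randn(batch, classes, samples, device=device)
    prediction = prediction_mean + torch.sqrt(prediction_variance) * normals

    loss = F.cross_entropy(prediction, target_expanded, reduction="mean")
    loss.backward()
    return loss.detach().cpu(), mean_head.weight.grad.detach().cpu()


def is_bitwise_reproducible(seed: int = 0, device: str = "cpu") -> bool:
    """True if two identically seeded runs give identical loss and gradient."""
    loss_a, grad_a = one_step(seed, device)
    loss_b, grad_b = one_step(seed, device)
    return torch.equal(loss_a, loss_b) and torch.equal(grad_a, grad_b)


if __name__ == "__main__":
    print("cpu:", is_bitwise_reproducible(device="cpu"))
    if torch.cuda.is_available():
        print("cuda:", is_bitwise_reproducible(device="cuda"))
```

If the CUDA check reports a mismatch while the CPU check passes, that would point at the GPU kernels named in the warnings rather than at the seeding. If both pass, the difference between runs probably enters somewhere else, for example in data loading or in another part of the model.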
